Cerebro: AI‑Driven Crypto Portfolio Platform

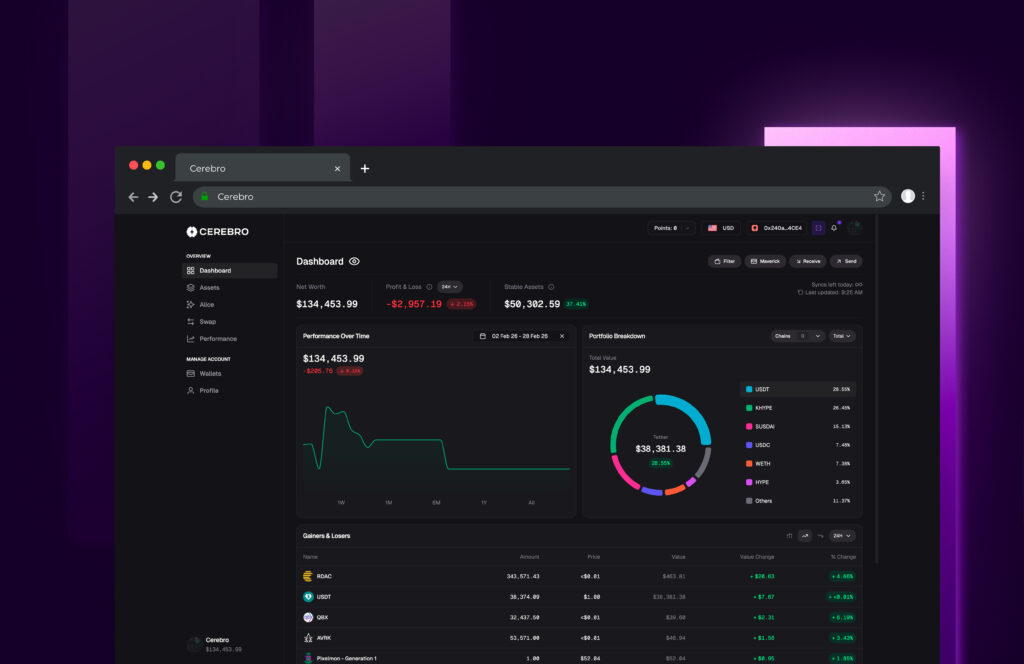

Multi‑agent portfolio intelligence with clustering‑based allocation, risk controls (VaR, drawdown), and conversational analytics, designed for retail and pro users.

About the project

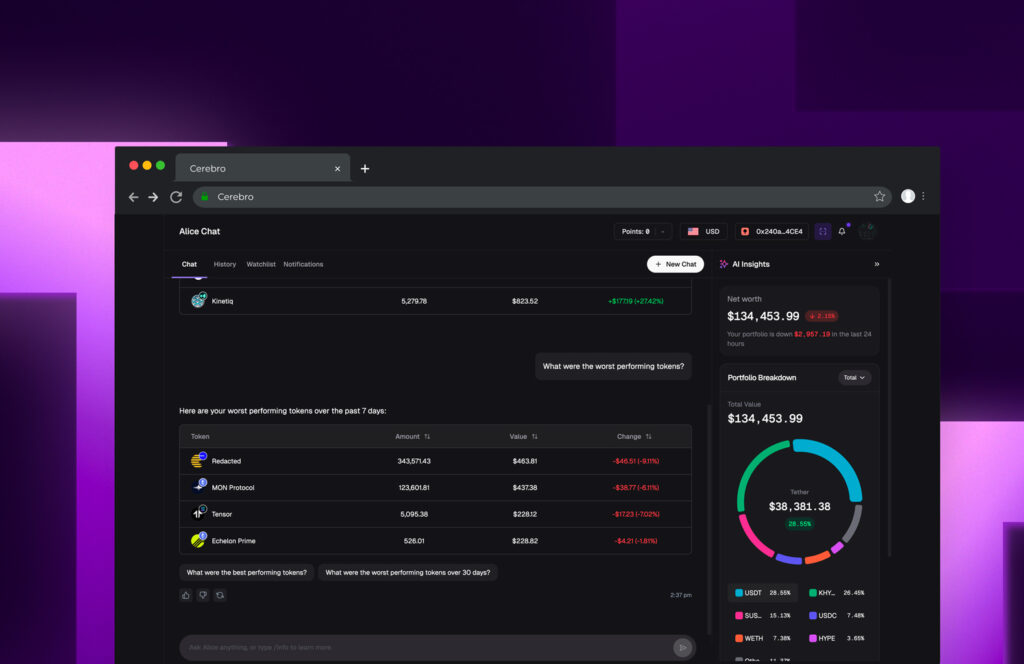

Cerebro helps users construct, analyze, and monitor crypto portfolios using machine learning and an LLM‑powered assistant (“Alice”).

The system clusters thousands of tokens into 8 categories and tailors allocations to user risk profiles from Ultra Conservative to Ultra Aggressive.

Cerebro

Crypto Analytics & Portfolio Management

Dedicated Team: AI/ML, Backend, Frontend, Data, DevOps

LangGraph, Python, Node.js, Next.js, MongoDB, PostgreSQL, Redis, Pinecone, Modal, Vercel

Challenges

Signal quality

Many data sources but low signal‑to‑noise; needed robust features and evaluation.

Explainability

Users must trust recommendations with clear rationales and risk context.

Latency & scale

Fast responses over large historical datasets and minute‑level price feeds.

Security & privacy

Protect wallet addresses, balances, and preferences end‑to‑end.

Our solution

KVY TECH built a modular, explainable portfolio engine and LLM agent that couples ML signals with clear risk policies. The architecture supports rapid experimentation and production reliability.

Clustering‑based allocation

8 asset clusters (Steady, Momentum, Liquidity Leaders, etc.) guide diversified portfolios per risk tier.

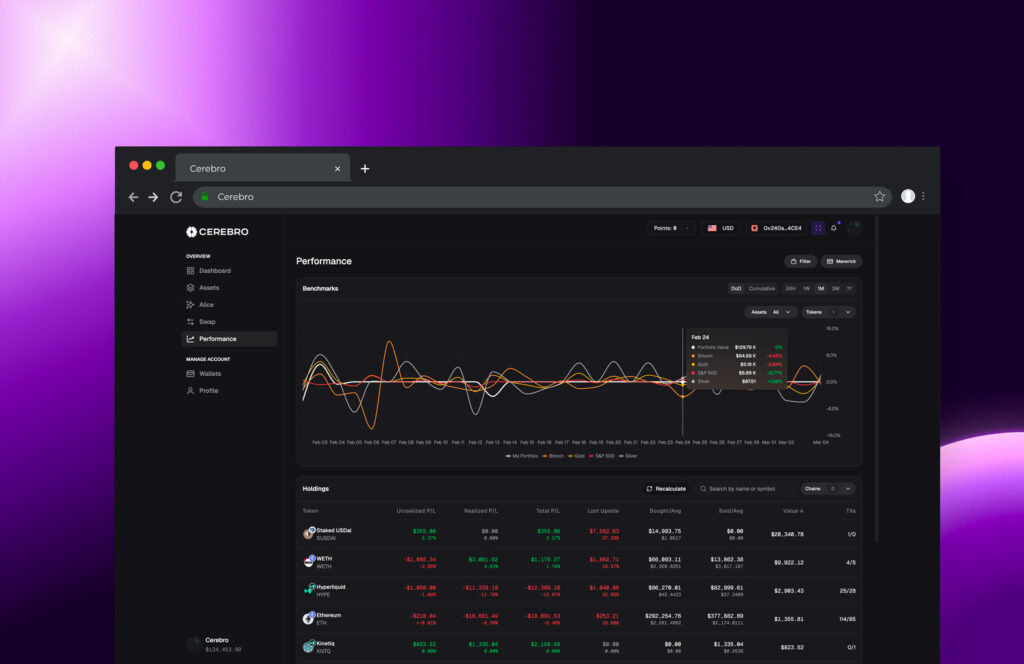

Backtesting & metrics

Evaluate returns, volatility, Sharpe/Sortino, and max drawdown vs BTC benchmark.
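As a rough illustration of the metrics named above, the sketch below computes simple returns, annualized Sharpe and Sortino ratios, and max drawdown for a portfolio and a BTC benchmark. It is not the project's code (the source only says the bt library is used for backtesting). The price series, the 365 periods per year, and the zero risk-free rate are all assumptions chosen for the example.

```python
import math
from statistics import mean, stdev


def simple_returns(prices):
    """Period-over-period simple returns from a price (or equity) series."""
    return [b / a - 1.0 for a, b in zip(prices, prices[1:])]


def max_drawdown(prices):
    """Largest peak-to-trough decline, returned as a negative fraction."""
    peak = prices[0]
    worst = 0.0
    for p in prices:
        peak = max(peak, p)
        worst = min(worst, p / peak - 1.0)
    return worst


def sharpe(returns, periods_per_year=365, risk_free=0.0):
    """Annualized Sharpe ratio; needs at least two returns."""
    excess = [r - risk_free / periods_per_year for r in returns]
    sd = stdev(excess)
    if sd == 0:
        return float("nan")
    return mean(excess) / sd * math.sqrt(periods_per_year)


def sortino(returns, periods_per_year=365, target=0.0):
    """Annualized Sortino ratio using downside deviation below `target`."""
    downside_sq = [min(0.0, r - target) ** 2 for r in returns]
    downside_dev = math.sqrt(sum(downside_sq) / len(returns))
    if downside_dev == 0:
        return float("nan")
    return (mean(returns) - target) / downside_dev * math.sqrt(periods_per_year)


if __name__ == "__main__":
    # Hypothetical daily closes, for illustration only.
    portfolio = [100, 103, 101, 106, 104, 110]
    btc = [100, 104, 98, 103, 99, 105]
    for name, series in (("portfolio", portfolio), ("BTC benchmark", btc)):
        r = simple_returns(series)
        print(name, round(sharpe(r), 2), round(sortino(r), 2),
              round(max_drawdown(series), 4))
```

Reporting the same four numbers for the portfolio and the benchmark side by side keeps the comparison honest: a higher return with a much deeper drawdown shows up immediately.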

Real‑time alerts

ML‑triggered notifications for threshold breaches, drift, or market regimes.
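A minimal sketch of the rule side of such alerts, assuming "drift" means allocation drift (actual weights moving away from target weights) and "threshold breach" means a drawdown limit being crossed. The function names, tolerance, and limits are hypothetical; the ML trigger and market-regime detection described above are not modeled here.

```python
def drift_alerts(target_weights, current_values, tolerance=0.05):
    """Return (asset, weight_gap) for assets whose actual weight differs
    from the target weight by more than `tolerance`."""
    total = sum(current_values.values())
    if total <= 0:
        return []
    alerts = []
    for asset, target in target_weights.items():
        actual = current_values.get(asset, 0.0) / total
        gap = actual - target
        if abs(gap) > tolerance:
            alerts.append((asset, round(gap, 4)))
    return alerts


def drawdown_breached(equity_curve, limit=0.20):
    """True if the current drawdown from the running peak exceeds `limit`."""
    peak = max(equity_curve)
    current = equity_curve[-1]
    return (peak - current) / peak > limit


if __name__ == "__main__":
    # Hypothetical targets and holdings (in quote currency).
    targets = {"BTC": 0.5, "ETH": 0.3, "SOL": 0.2}
    holdings = {"BTC": 7000.0, "ETH": 2000.0, "SOL": 1000.0}
    print(drift_alerts(targets, holdings))
    print(drawdown_breached([100, 120, 90]))
```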

Conversational analytics

Alice explains rebalancing impact, token substitutions, and defensive tilts (MS1–MS9).

Data platform

Minute‑level price ingestion (CoinMarketCap and others), a feature store, and vectors for semantic retrieval.

Security layer

Prompt sanitation, masking, app‑layer encryption, RBAC, and privacy‑first logging.

Technologies used

High-level architecture

Agent

LangGraph (ReAct loop) with tools for data retrieval, optimization, and alert rules.

Data

MongoDB for prices & analytics; PostgreSQL for relational entities; Pinecone for vector search.

Compute

Python + Modal for jobs; Node.js/Next.js API for app; Redis for queues & caching.

Backtesting

bt library with benchmark comparisons and reporting.

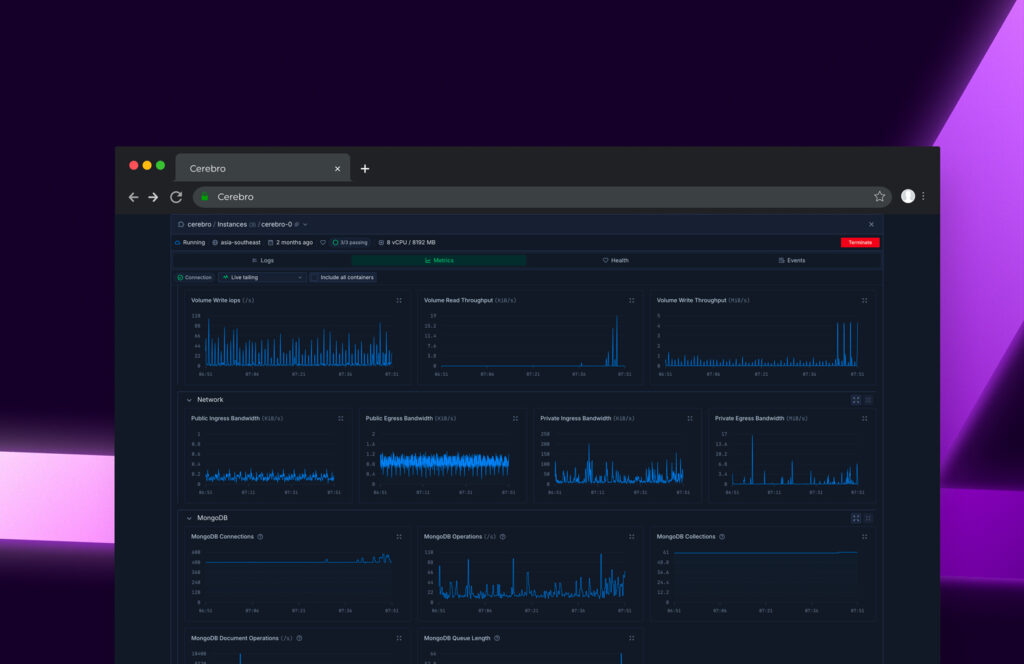

Observability

LangSmith traces for conversations; centralized logs/metrics/errors.

Deployment

LangGraph Cloud for agents; Vercel for web; containerized workers for schedulers.

Key capabilities

Risk‑aware portfolios

Uses VaR, drawdown limits, and cluster caps aligned to user risk profile.
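One way to picture these checks is a small policy validator: historical VaR from past returns, max drawdown from the compounded equity curve, and summed exposure per cluster against a cap. This is a sketch under assumptions, not Cerebro's engine. The token symbols, cap values, and limits are hypothetical, and historical simulation is only one of several VaR methods.

```python
def historical_var(returns, confidence=0.95):
    """Historical-simulation VaR as a positive loss fraction."""
    ordered = sorted(returns)
    idx = int((1.0 - confidence) * len(ordered))
    return -ordered[idx]


def max_drawdown_from_returns(returns):
    """Max drawdown of the compounded equity curve, as a positive fraction."""
    equity, peak, worst = 1.0, 1.0, 0.0
    for r in returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        worst = min(worst, equity / peak - 1.0)
    return -worst


def cluster_exposure(weights, cluster_of):
    """Sum token weights per cluster."""
    exposure = {}
    for token, w in weights.items():
        cluster = cluster_of[token]
        exposure[cluster] = exposure.get(cluster, 0.0) + w
    return exposure


def policy_violations(weights, cluster_of, returns, policy):
    """List human-readable violations of a risk-profile policy."""
    issues = []
    for cluster, total in cluster_exposure(weights, cluster_of).items():
        cap = policy["cluster_caps"].get(cluster, 1.0)
        if total > cap + 1e-9:
            issues.append(f"cluster cap exceeded: {cluster} ({total:.2f} > {cap:.2f})")
    if historical_var(returns, policy["var_confidence"]) > policy["var_limit"]:
        issues.append("VaR limit exceeded")
    if max_drawdown_from_returns(returns) > policy["drawdown_limit"]:
        issues.append("drawdown limit exceeded")
    return issues


if __name__ == "__main__":
    # Hypothetical tokens, caps, and limits for a conservative profile.
    weights = {"AAA": 0.5, "BBB": 0.3, "CCC": 0.2}
    cluster_of = {"AAA": "Momentum", "BBB": "Momentum", "CCC": "Steady"}
    policy = {
        "cluster_caps": {"Momentum": 0.6, "Steady": 0.8},
        "var_confidence": 0.95,
        "var_limit": 0.04,
        "drawdown_limit": 0.10,
    }
    daily_returns = [0.01] * 18 + [-0.05, -0.10]
    for issue in policy_violations(weights, cluster_of, daily_returns, policy):
        print(issue)
```

Returning a list of plain-language violations, rather than a single pass/fail flag, fits the explainability goal: each rejected allocation comes with the specific rule it broke.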

Editable suggestions

Users can swap tokens/categories; engine re‑evaluates risk & returns instantly.
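The swap-and-re-evaluate flow can be sketched as follows, assuming constant weights that are rebalanced every period and per-token return series already available in memory. The helper names, token symbols, and return figures are hypothetical; the real engine presumably recomputes a fuller set of risk metrics.

```python
from statistics import mean, pstdev


def portfolio_returns(weights, token_returns):
    """Weighted sum of token returns per period (constant-weight assumption)."""
    n = min(len(token_returns[t]) for t in weights)
    return [sum(w * token_returns[t][i] for t, w in weights.items())
            for i in range(n)]


def swap_token(weights, old, new):
    """Return a new weight map with `old` replaced by `new` at the same weight."""
    updated = dict(weights)
    moved = updated.pop(old)
    updated[new] = updated.get(new, 0.0) + moved
    return updated


def summarize(weights, token_returns):
    """Mean return and volatility of the portfolio for quick comparison."""
    r = portfolio_returns(weights, token_returns)
    return {"mean": mean(r), "vol": pstdev(r)}


if __name__ == "__main__":
    # Hypothetical per-period returns for three tokens.
    token_returns = {
        "AAA": [0.10, -0.10, 0.06],
        "BBB": [0.02, -0.02, 0.01],
        "CCC": [0.01, 0.00, 0.01],
    }
    before = {"AAA": 0.5, "BBB": 0.5}
    after = swap_token(before, "AAA", "CCC")
    print("before", summarize(before, token_returns))
    print("after ", summarize(after, token_returns))
```

Because `swap_token` returns a new dictionary instead of mutating the input, the original suggestion stays available for a before/after comparison.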

Scenario Q&A

Ask about the impact of trimming to 5 tokens, a defensive shift, BTC correlation, or crash scenarios.

Virtual portfolios

Create, save, and compare multiple what‑if allocations with donut charts & KPIs.

Security & privacy

Application‑layer encryption (AES‑GCM/Fernet/Tink) for sensitive fields (wallets, balances).

Prompt sanitization & masking for LLM I/O (LLM Guard) and prompt‑injection defenses (Rebuff).

Privacy‑first logging with hashing & redaction; scoped JWTs and row‑level security where applicable.

Runtime moderation and evaluation tests (Giskard) to prevent data leakage and regressions.
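To make the "hashing & redaction" idea concrete, here is a small standard-library sketch that replaces wallet addresses in log messages with a keyed hash, so log lines for the same wallet can still be correlated without storing the address. It is an illustration only: the EVM-style address pattern, the HMAC-SHA256 choice, the 16-character truncation, and the key handling are assumptions, and it does not represent LLM Guard, Rebuff, or the project's actual logging code.

```python
import hashlib
import hmac
import re

# Assumption: EVM-style addresses (0x followed by 40 hex characters).
WALLET_RE = re.compile(r"\b0x[a-fA-F0-9]{40}\b")


def pseudonymize(value, key):
    """Keyed hash of a sensitive value; stable for the same key and value."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]


def redact(message, key):
    """Replace every wallet address in `message` with a pseudonymous token."""
    def _replace(match):
        address = match.group(0).lower()  # normalize case before hashing
        return f"<wallet:{pseudonymize(address, key)}>"
    return WALLET_RE.sub(_replace, message)


if __name__ == "__main__":
    # Hypothetical key; in practice it would come from a secret store.
    log_key = b"example-only-key"
    address = "0x" + "ab" * 20
    print(redact(f"rebalance requested for {address}", log_key))
```

A keyed hash is used instead of a plain SHA-256 so that someone with access to the logs alone cannot confirm a guessed address by hashing it.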

Note: Quantitative KPIs (Sharpe uplift, alert latency) can be added once post‑production analytics are available.

Results and impact

Explainable suggestions

Alice cites drivers, constraints, and trade‑offs for each portfolio change.

Faster iteration

Modular agents & data layer enabled rapid feature experiments and A/Bs.

Production reliability

Cloud‑hosted agents with observability reduced incident MTTR (mean time to recovery) and intermittent failures.

User confidence

Risk rules & KPIs improved trust vs opaque black‑box signals.

Need an explainable AI portfolio engine?

We build reliable, auditable ML + LLM systems that users trust.